Secure AI Development & MLOps Platform

A fully air-gapped desktop environment for data science, model training, RAG pipelines, and AI agent development — built for environments where data cannot leave the machine

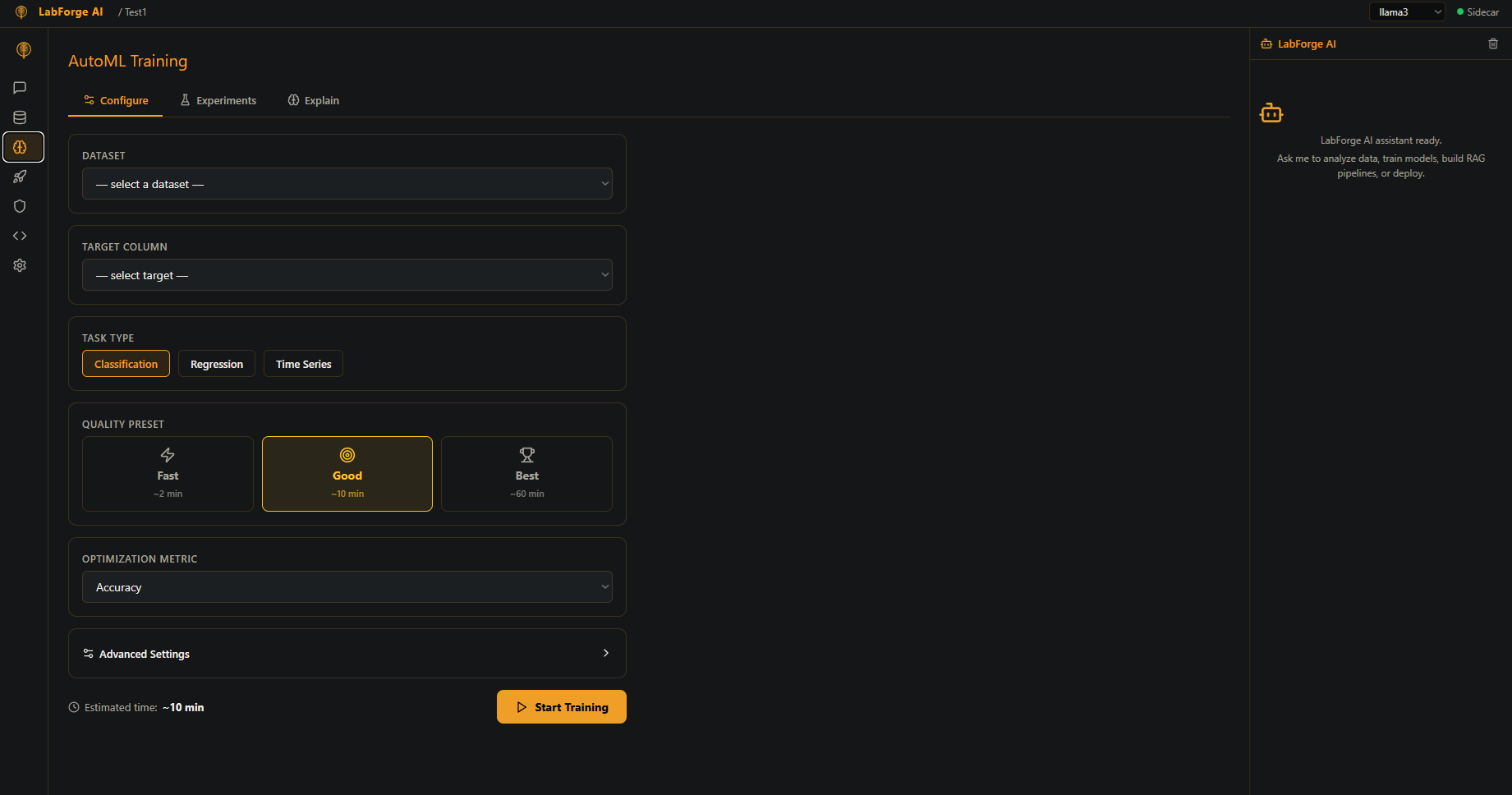

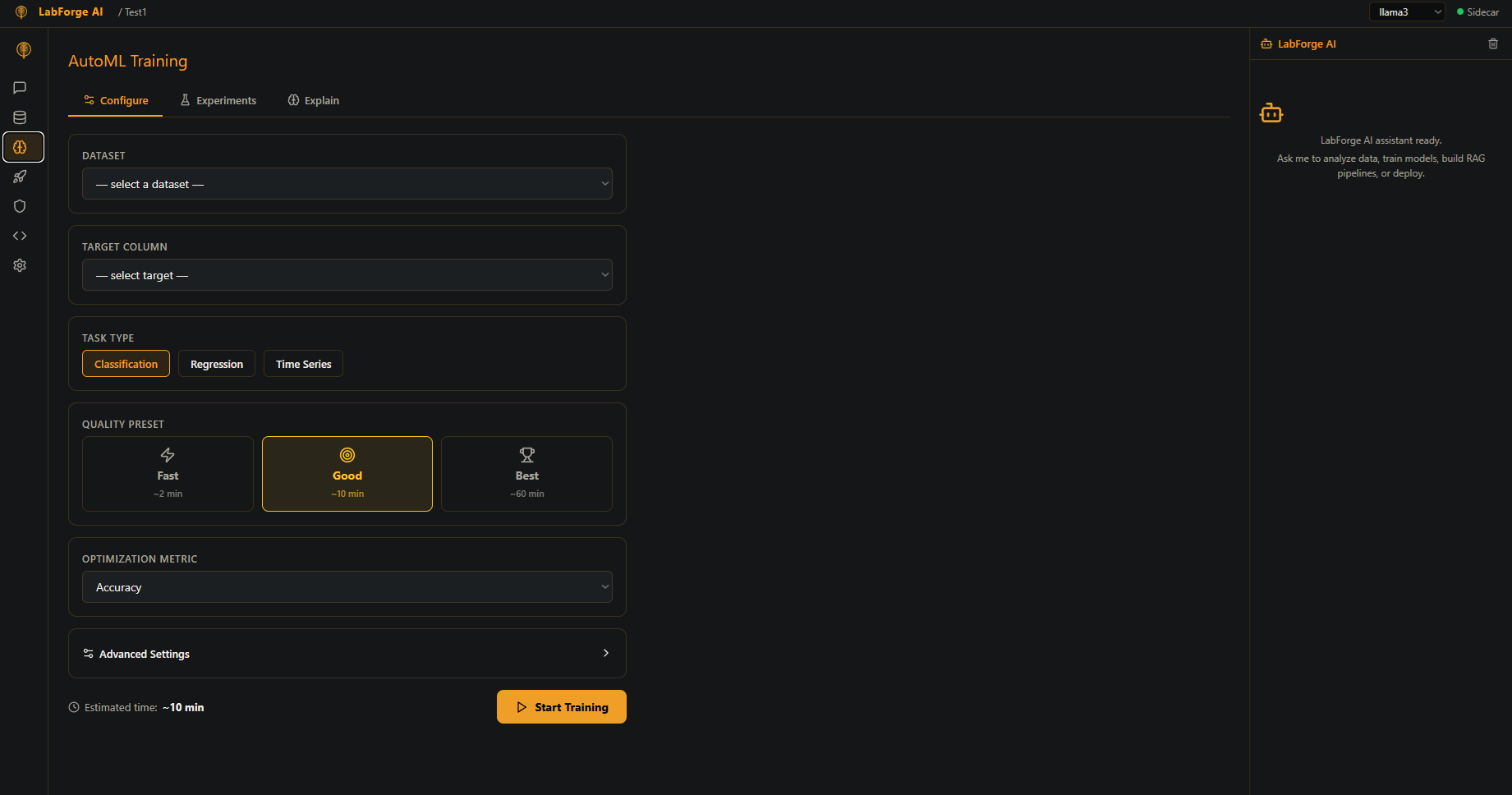

AutoGluon-powered automated model training with MLflow experiment tracking and SHAP explainability

Ollama-backed chat with Llama 3, Mistral, and CodeLlama — RAG-enabled document retrieval

Zero network dependencies. SBOM generation, vulnerability scanning, and artifact signing built in

Every capability your team needs — from data ingestion to production deployment — in a single air-gapped desktop application

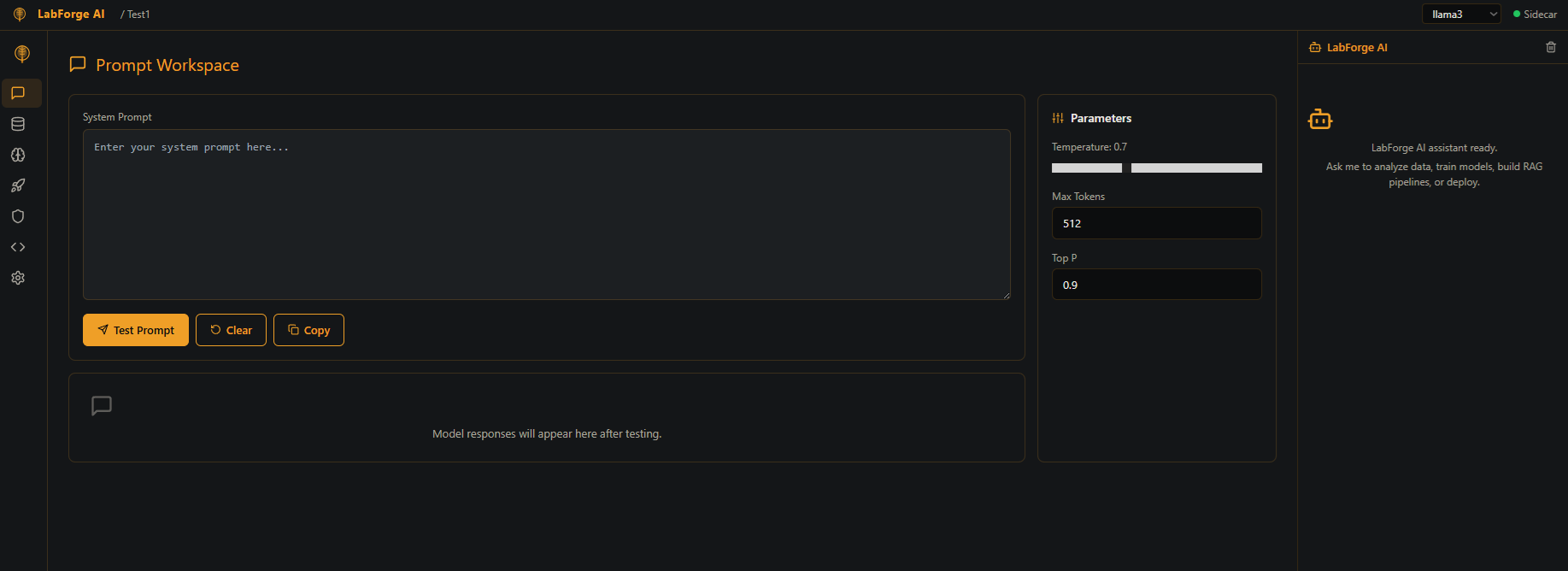

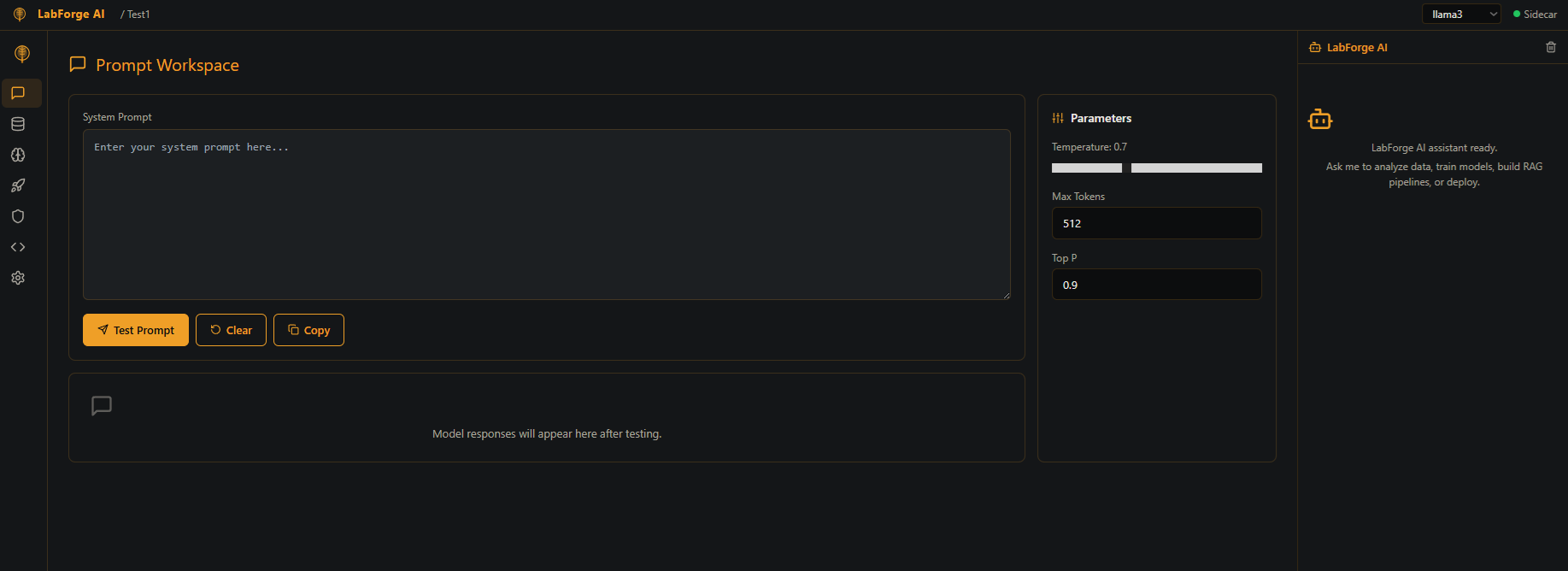

Conversational AI interface powered by local Ollama models. Send prompts, get responses, and test model behavior in a clean workspace. Supports synchronous and streaming (SSE) responses.
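To illustrate the streaming mode, here is a minimal sketch of how a client might consume a Server-Sent Events stream of chat tokens. The payload shape (a JSON object with a `token` field) and the `[DONE]` end marker are assumptions made for this example, not the platform's documented wire format. The helper names `iter_sse_data` and `collect_tokens` are hypothetical.

```python
import json
from typing import Iterable, Iterator


def iter_sse_data(lines: Iterable[str]) -> Iterator[str]:
    """Yield the data payload of each SSE event from an iterable of text lines."""
    buffer = []
    for raw in lines:
        line = raw.rstrip("\r\n")
        if line == "":
            # A blank line ends the current event; emit it if it carried data.
            if buffer:
                yield "\n".join(buffer)
                buffer = []
            continue
        if line.startswith(":"):
            continue  # SSE comment line, often used as a keep-alive
        field, _, value = line.partition(":")
        if field == "data":
            # The SSE format allows one optional space after the colon.
            buffer.append(value[1:] if value.startswith(" ") else value)
    if buffer:
        # Lenient choice for this sketch: flush a trailing event that has
        # no terminating blank line instead of discarding it.
        yield "\n".join(buffer)


def collect_tokens(lines: Iterable[str]) -> str:
    """Join streamed tokens into one reply (payload shape is assumed)."""
    parts = []
    for payload in iter_sse_data(lines):
        if payload == "[DONE]":  # hypothetical end-of-stream marker
            break
        parts.append(json.loads(payload)["token"])  # hypothetical field name
    return "".join(parts)
```

In practice, `lines` would be the decoded line iterator of an HTTP response from the local chat endpoint. A synchronous request would skip this parsing and read one complete JSON body.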

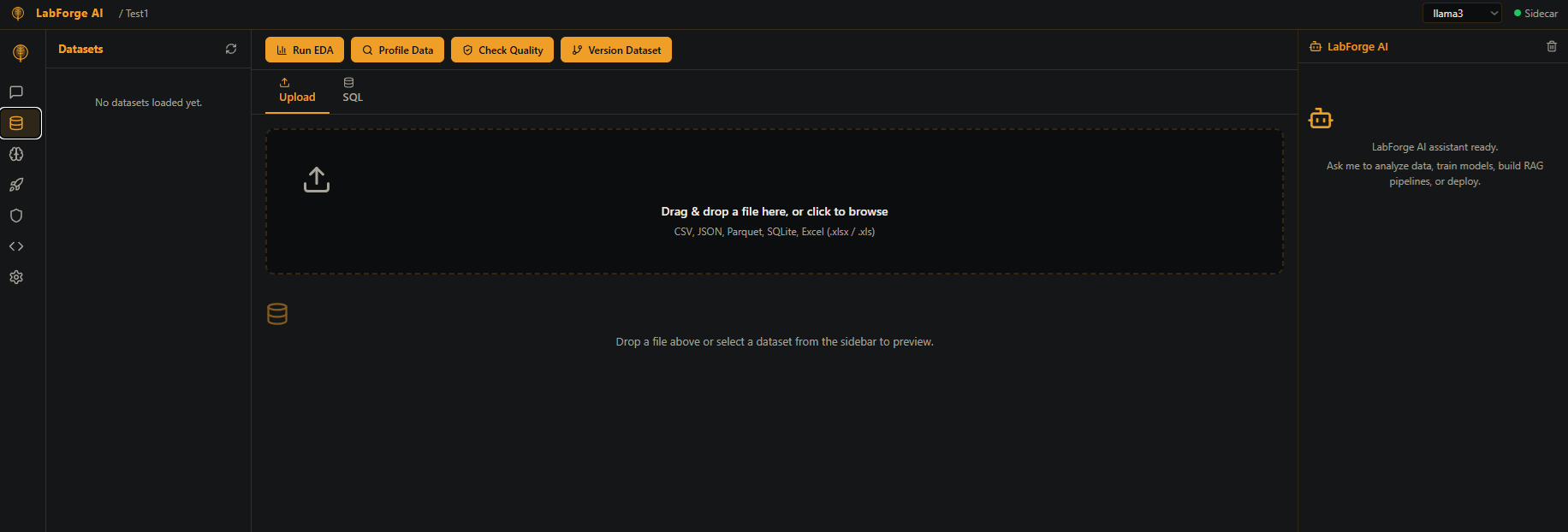

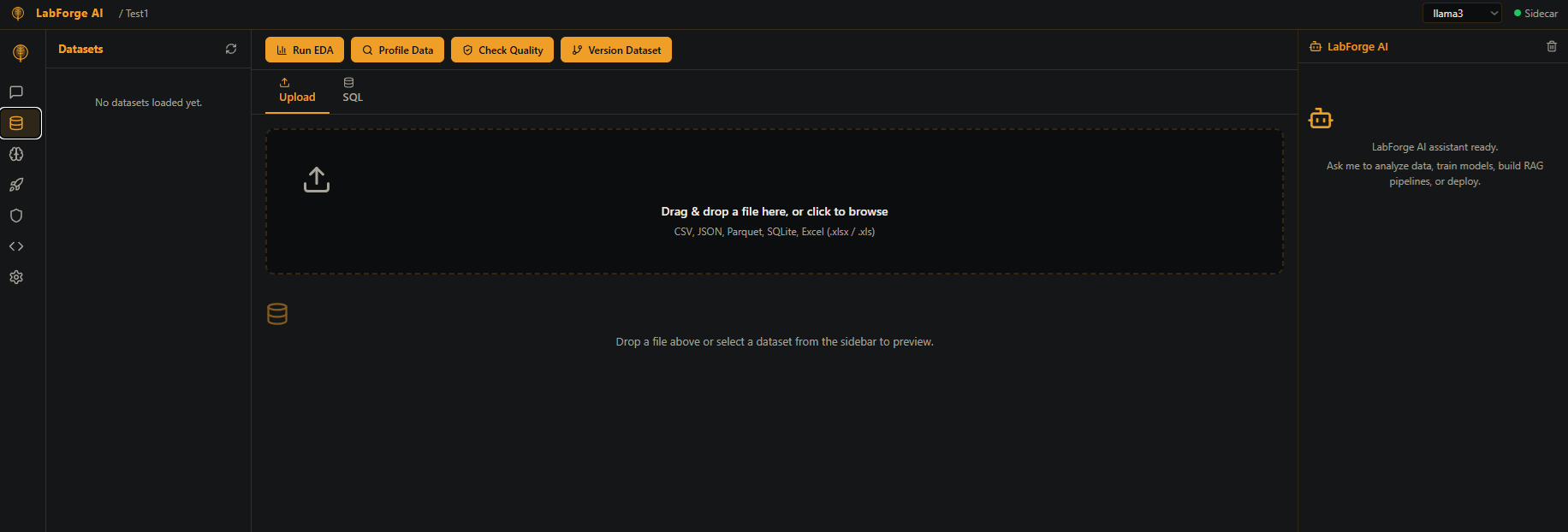

Data ingestion and exploratory analysis. Upload CSV and Excel datasets, run automated EDA, quality checks, and statistical profiling. Prepare datasets for training pipelines.

No-code model training pipeline. Select a dataset, choose a target column, pick a quality preset, and launch AutoML training. Tracks runs with MLflow integration.

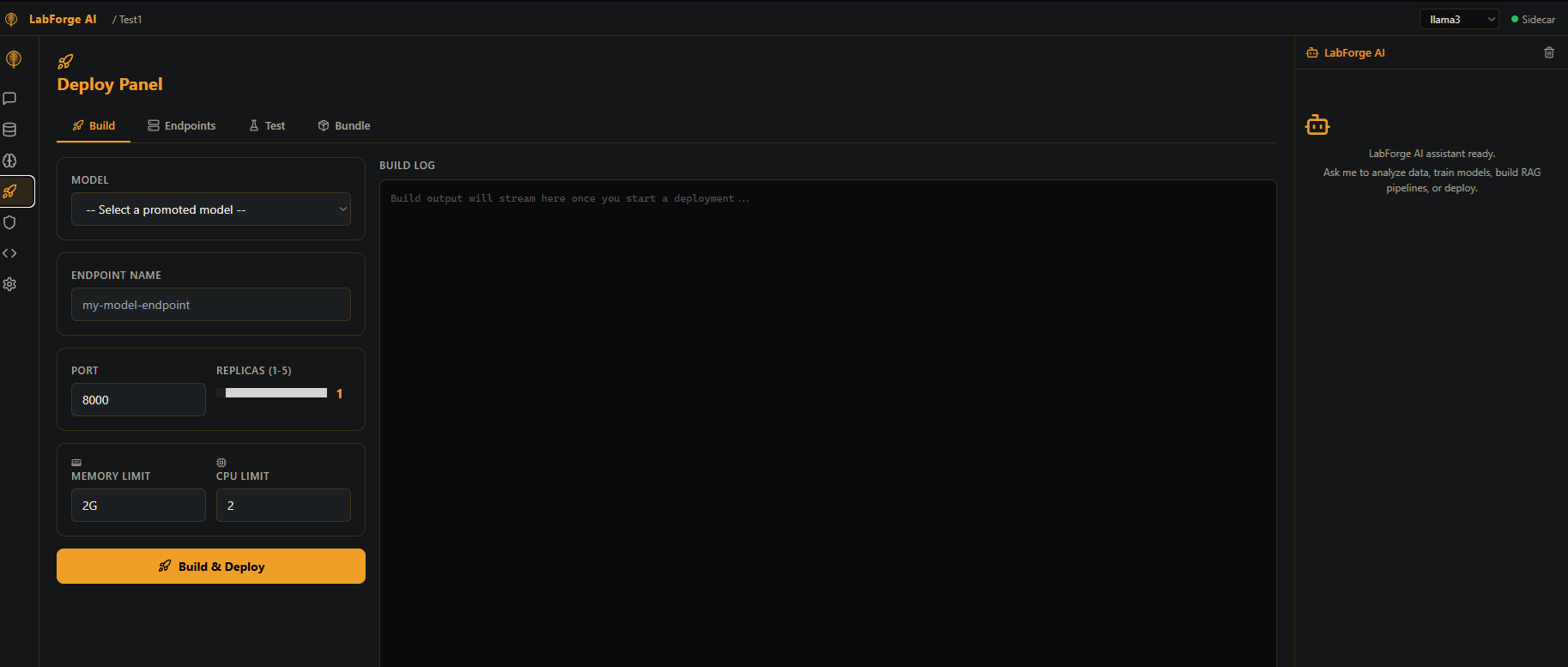

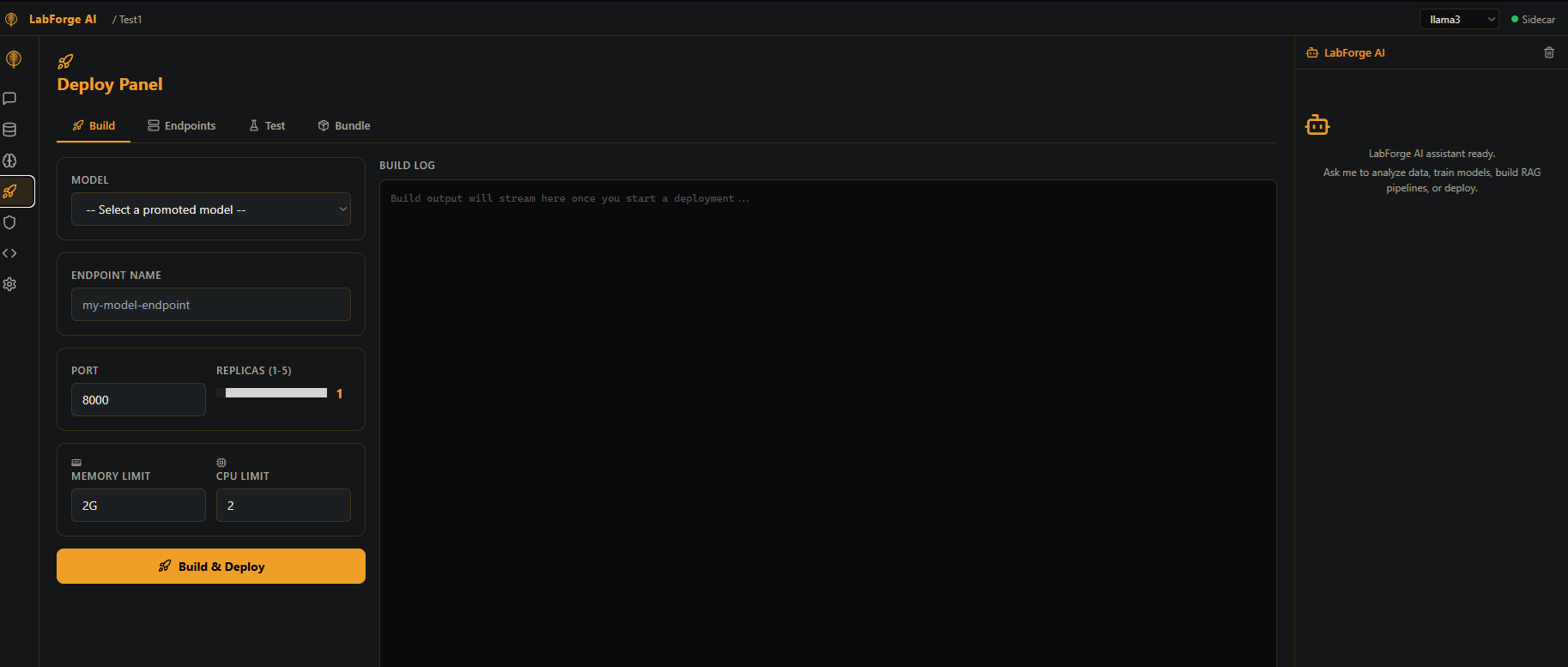

Model deployment manager. Promote trained model runs to live endpoints via BentoML and Podman. Monitor deployment health and manage active endpoints.

Air-gapped security dashboard. SBOM generation, vulnerability scanning, compliance status, and ML-DSA-65 post-quantum license verification. Built for CMMC/RMF environments.

AI-assisted code generation and editing. Submit natural language requests, get runnable code back. Integrated with local Ollama models — no cloud required.

Platform configuration. Model selector, sidecar port config, user password management, software update checker, and system status display.

ML-DSA-65 (CRYSTALS-Dilithium) license protection with NIST FIPS 204-compliant post-quantum digital signatures

Zero internet required after install. All AI inference, training, and deployment run entirely on-premises with no external calls

Llama 3, Qwen 2.5 Coder, and compatible open-source models — chat, code generation, and RAG all powered locally

Drag-and-drop ReactFlow workflow builder to connect data ingestion, training, and deployment stages visually

Document ingestion, ChromaDB vector storage, LangChain retrieval, and FlashRank reranking for secure document Q&A
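The retrieve-then-rerank flow described above can be sketched with the standard library alone. This is a toy stand-in, not the platform's implementation: bag-of-words cosine similarity replaces embedding search in ChromaDB, a simple query-term coverage score replaces FlashRank, and no LangChain code is involved. All class and function names here are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class ToyVectorStore:
    """In-memory stand-in for a vector database (term counts, not embeddings)."""

    def __init__(self):
        self._docs = []  # list of (text, term-count vector)

    def add(self, text):
        self._docs.append((text, Counter(tokenize(text))))

    def search(self, query, k=3):
        """First stage: return the k documents most similar to the query."""
        q = Counter(tokenize(query))
        ranked = sorted(self._docs, key=lambda d: cosine(q, d[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


def rerank(query, candidates, top_n=1):
    """Second stage: reorder candidates by the share of query terms they contain."""
    q_terms = set(tokenize(query))

    def coverage(text):
        if not q_terms:
            return 0.0
        return len(q_terms & set(tokenize(text))) / len(q_terms)

    return sorted(candidates, key=coverage, reverse=True)[:top_n]


def answer_context(store, query):
    """Retrieve broadly, then keep only the best reranked passage."""
    return rerank(query, store.search(query, k=3), top_n=1)
```

The two-stage shape is the point of the sketch: a cheap first pass narrows the corpus to a few candidates, and a more selective second pass decides which passages reach the local model as context.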

Build autonomous AI agent workflows and deploy them as containerized microservices via Podman — no cloud orchestrator needed

ML development on classified networks with zero data exfiltration risk

NIST-compliant AI/ML workflows with built-in SBOM and vulnerability management

Train models on sensitive patient or research data without cloud exposure

Full ML lifecycle from EDA to deployment in a single desktop application

Develop, train, and deploy ML models without leaving your secure environment

Request Demo